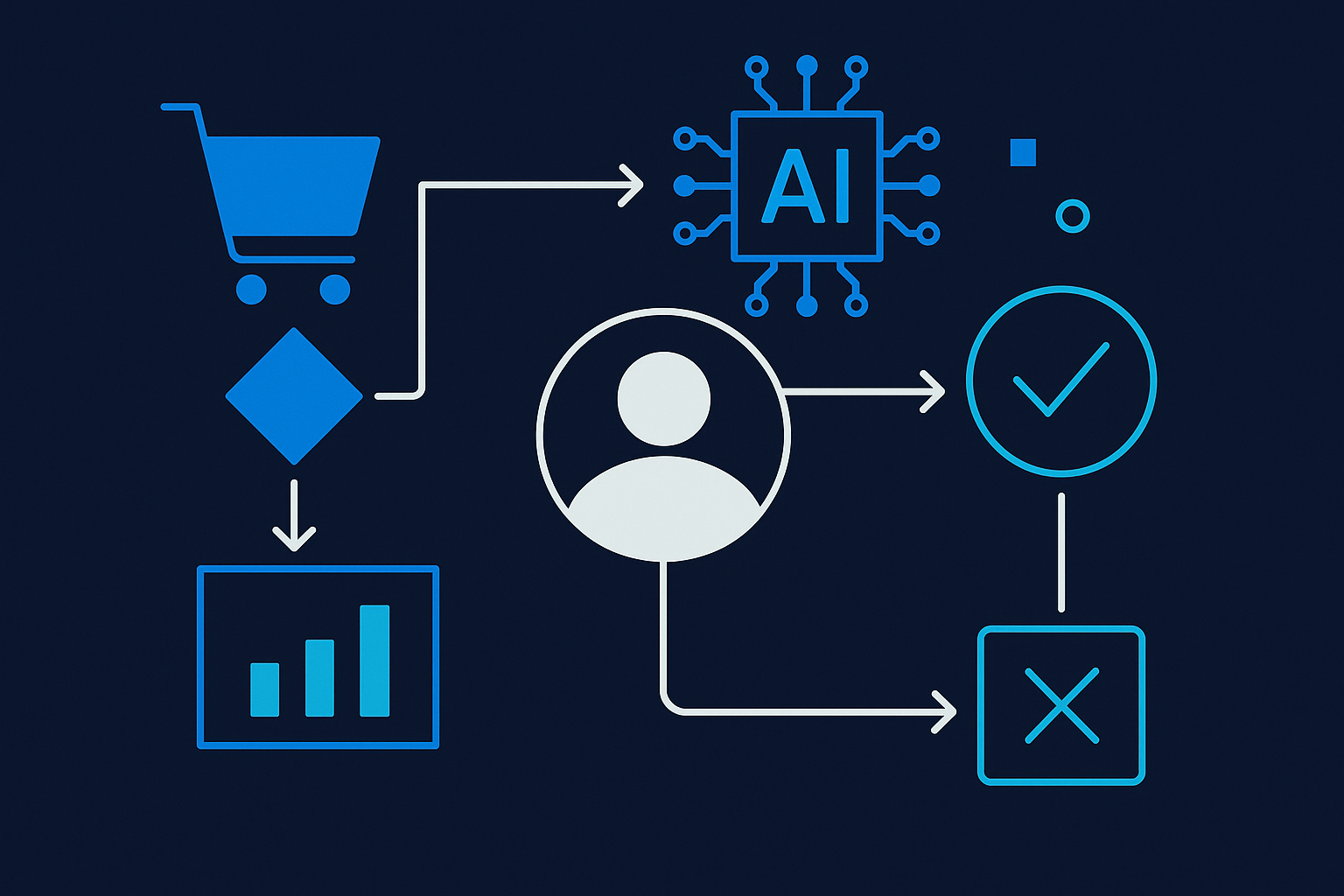

Every retail and CPG company is chasing AI-driven demand forecasting and supply chain orchestration. Almost none of them are asking the right question first: who actually owns the decision the AI is informing?

There's a wave of confidence in retail and CPG right now. Demand forecasting. Supply chain orchestration. Dynamic merchandising. AI-powered customer engagement. The conference circuit is buzzing. The vendor decks are thick.

And most of it is going to fail quietly.

Not because the models are bad. Not because the data isn't there. It's going to fail because nobody sat down and answered one foundational question before they started: Who owns the decision this AI is informing, and what happens when the AI is wrong?

That question sounds simple. It is not.

The language showing up in 2026 retail AI coverage is interesting. Companies are talking about "reengineering decision-making" — not just automating tasks, but restructuring how choices get made across demand planning, inventory positioning, pricing, and customer interaction.

That framing is correct. It's also the part that breaks organizations.

When you automate a task, the accountability stays where it was. A buyer was responsible for a purchase order before. The system generates the PO now. The buyer is still responsible. That's manageable.

When you reengineer a decision, accountability gets blurry fast. The AI flagged low stock. The replenishment system auto-ordered. The regional manager got an exception report nobody reads. A stockout happened anyway. Who owns that?

In regulated environments — food safety, labeling compliance, financial controls embedded in retail operations — blurry accountability isn't just operationally messy. It's a liability.

Large enterprise retailers have armies of people to sort this out slowly. They can afford 18-month AI governance buildouts. Mid-market companies can't, and frankly, they shouldn't need to.

But mid-market retail and CPG operators face a specific version of this problem that the enterprise playbook doesn't solve:

You have fewer layers between the AI output and the operational action. A wrong demand forecast hurts a 10-store regional chain proportionally more than the same error hurts a 2,000-store national operator. The blast radius is a larger share of the business. Recovery time is longer. The team absorbing it is smaller.

This means the stakes of unclear decision ownership are higher, not lower, even though the budgets and governance infrastructure are smaller.

If you're a retail or CPG operator looking at AI investments in demand forecasting, supply chain, or merchandising in 2026, here's the framework I'd run before you sign anything:

1. What decision does this AI actually touch?

Not the use case. Not the demo. The specific decision. "Improve forecast accuracy" is not a decision. "A buyer approves or overrides a replenishment order by Thursday EOD" is a decision. Get that specific.

2. Who owns that decision today, and does that change?

If the AI is informing the decision, the human owner should be clearer than ever — not murkier. If your implementation plan doesn't explicitly name who approves, overrides, and escalates AI recommendations, you don't have an implementation plan. You have a pilot with no off-ramp.

3. What's the failure mode, and who gets the call?

Every AI workflow in operations has a failure mode. The demand model under-forecasts a seasonal spike. The pricing engine sets a price that contradicts a trade agreement. The inventory system deprioritizes a compliance-sensitive SKU. Someone needs to get the call. Name that person before you go live, not after.
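One way to make question 3 concrete is to keep the failure modes in a small register and check that each has a named person on call. The sketch below is illustrative only: the `FailureMode` class, the `unowned` helper, and the role names are assumptions for the example, not part of any vendor tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureMode:
    """One way the AI workflow can go wrong, and who gets the call."""
    description: str
    on_call: str  # a named person or role; empty string means nobody is named yet


def unowned(failure_modes):
    """Return the descriptions of failure modes with no named owner."""
    return [fm.description for fm in failure_modes if not fm.on_call.strip()]


# Hypothetical register; the owners here are placeholders for illustration.
failure_modes = [
    FailureMode("Demand model misses a seasonal spike", "Demand planning lead"),
    FailureMode("Pricing engine contradicts a trade agreement", ""),
    FailureMode("Inventory system deprioritizes a compliance-sensitive SKU",
                "Compliance manager"),
]

if __name__ == "__main__":
    for description in unowned(failure_modes):
        print(f"No owner named for: {description}")
```

Any entry this check flags is a gap to close before go-live.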

Here's the contrarian take: the retail and CPG companies that win with AI in the next three years won't be the ones with the most sophisticated models. They'll be the ones that designed clean decision ownership into their AI workflows from day one.

Clean decision ownership means faster exception handling, better model feedback loops, and — critically — the ability to actually defend your process when something goes wrong. Whether that's a supplier audit, a food safety inquiry, or a franchisee dispute.

The algorithm is a commodity. The workflow that makes it trustworthy is not.

Practical takeaway: Before your next AI vendor conversation, draft a one-page decision map for the process you're targeting. Name the decision, the human owner, the AI's role (inform vs. recommend vs. execute), and the escalation path. If you can't draft that page, you're not ready to buy software. You're ready for a Blueprint conversation.
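If it helps to see the one-page decision map as a structure, here is a minimal sketch. The four fields come straight from the takeaway above. The class name `DecisionMap`, the `gaps` method, and the sample owners are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class AIRole(Enum):
    """The three roles named in the takeaway."""
    INFORM = "inform"
    RECOMMEND = "recommend"
    EXECUTE = "execute"


@dataclass
class DecisionMap:
    decision: str          # the specific decision, not the use case
    human_owner: str       # who approves or overrides
    ai_role: AIRole        # inform vs. recommend vs. execute
    escalation_path: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """List the fields that are still blank on the page."""
        missing = []
        if not self.decision.strip():
            missing.append("decision")
        if not self.human_owner.strip():
            missing.append("human_owner")
        if not self.escalation_path:
            missing.append("escalation_path")
        return missing


# Hypothetical example built on the replenishment decision used earlier.
replenishment = DecisionMap(
    decision="A buyer approves or overrides a replenishment order by Thursday EOD",
    human_owner="Category buyer",
    ai_role=AIRole.RECOMMEND,
    escalation_path=["Category buyer", "Regional manager"],
)

# A draft with no owner and no escalation path yet.
draft = DecisionMap(
    decision="Approve weekly price changes",
    human_owner="",
    ai_role=AIRole.INFORM,
)

if __name__ == "__main__":
    print(replenishment.gaps())
    print(draft.gaps())
```

The check only catches blank fields. Whether the decision is specific enough, and whether the named owner actually has the authority, still takes a human read.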

Sean Cummings

Founder of Laminar Flow Analytics. Specializes in AI workflow automation for regulated industries — medical device, financial services, and complex logistics operations.